3. Architecture

The Python code is highly modular and designed with testability in mind, so that specific parts can run in isolation. This makes it easier to study tough issues such as double-precision reproducibility, boundary conditions and the comparison of numeric outputs, as well as to study the code in sub-routines.

Tip

Run the test-cases with the pytest command.
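Because the formulae can run in isolation, a single formula can be unit-tested on its own. The sketch below shows what such a pytest test module could look like. `calc_p_norm` is a hypothetical formula written only for this illustration (it is not taken from the project's code); the tests compare numeric outputs with an explicit tolerance and probe the boundary conditions.

```python
import numpy as np
from numpy.testing import assert_allclose


def calc_p_norm(p, p_rated):
    """Hypothetical formula under test: power normalized by the rated power."""
    return np.asarray(p, dtype=float) / p_rated


def test_p_norm_values():
    # Compare floating-point outputs with an explicit tolerance, not a bare `==`.
    assert_allclose(calc_p_norm([0.0, 40.0, 80.0], 80.0), [0.0, 0.5, 1.0], rtol=0, atol=1e-12)


def test_p_norm_boundaries():
    # Boundary conditions: zero power and full rated power.
    p_norm = calc_p_norm([0.0, 80.0], 80.0)
    assert p_norm[0] == 0.0
    assert p_norm[-1] == 1.0
```

Saved in a file named like `test_*.py`, these functions are collected and run by the `pytest` command.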

3.1. Data Structures

Computations are vectorial and based on hierarchical dataframes, all of them stored in a single structure, the datamodel. If the computation breaks, you can still retrieve all intermediate results computed up to that point.
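The following is a minimal sketch of why a single shared container keeps intermediate results available after a failure: each step stores its result before the next one starts. It assumes a plain `dict` standing in for the datamodel (the real one is a Pandel, described below), and the step names `v_ms`, `a` and `broken` are hypothetical.

```python
import pandas as pd


def run_steps(mdl, steps):
    """Run hypothetical calculation steps, storing each result in the shared `mdl` dict."""
    for name, fn in steps:
        mdl[name] = fn(mdl)  # stored before the next step starts
    return mdl


mdl = {"v": pd.Series([0.0, 18.0, 36.0], name="v")}  # velocities [km/h], 1 Hz samples
steps = [
    ("v_ms", lambda m: m["v"] / 3.6),  # km/h -> m/s
    ("a", lambda m: m["v_ms"].diff()),  # m/s^2, assuming 1-second sample steps
    ("broken", lambda m: m["missing_input"]),  # simulated failure
]
try:
    run_steps(mdl, steps)
except KeyError as ex:
    print("computation stopped, missing:", ex)

# The results of the steps that succeeded are still available.
print(sorted(mdl))  # -> ['a', 'v', 'v_ms']
```

Because `run_steps` mutates the caller's container in place, the caller keeps whatever was computed before the exception.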

Todo

Almost all of the names of the datamodel and formulae can be remapped. For instance, it is possible to run the tool on data containing n_idling_speed instead of n_idle (which is the default), without renaming the input data.
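One way such remapping could work is a read-only view that translates the default names into the user's names on lookup, leaving the input data untouched. This is only a sketch under that assumption; `RemappedView` and the alias table are hypothetical and not part of the project.

```python
from collections.abc import Mapping


class RemappedView(Mapping):
    """Read-only view exposing user data under the default datamodel names."""

    def __init__(self, data, aliases):
        self._data = data  # the user's data, never copied or renamed
        self._aliases = aliases  # default name -> name used in the user's data

    def __getitem__(self, name):
        return self._data[self._aliases.get(name, name)]

    def __iter__(self):
        # Report keys under their default names.
        reverse = {user: default for default, user in self._aliases.items()}
        return (reverse.get(key, key) for key in self._data)

    def __len__(self):
        return len(self._data)


user_data = {"n_idling_speed": 800, "n_rated": 5000}
view = RemappedView(user_data, {"n_idle": "n_idling_speed"})
print(view["n_idle"])  # -> 800
print(sorted(view))  # -> ['n_idle', 'n_rated']
```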

- mdl, datamodel

  The container of all the scalar Input & Output values, the WLTC constant factors, and 3 matrices: WOT, gwots, and the cycle run time series.

  It is composed of a stack of mergeable, JSON-schema-abiding trees of strings, numbers & pandas objects, formed with Python sequences & dictionaries, and URI-references. It is implemented in datamodel, supported by pandalone.pandata.Pandel.

- WOT, Full Load Curve

  An input array/dict/dataframe with the full load power curves, with (at least) 2 columns for (n, p) or their normalized values (n_norm, p_norm). See also https://en.wikipedia.org/wiki/Wide_open_throttle

- gwots, Grid WOTs

  A dataframe produced from WOT for all gear-ratios, indexed by a grid of rounded velocities, and with 2-level columns (item, gear). It is generated by interpolate_wot_on_v_grid(), and augmented by calc_p_avail_in_gwots() & calc_road_load_power().

  Todo

  Move the Grid WOTs code into its own module, gwots.

- cycle, Cycle run

  A dataframe with all the time-series, indexed by the time of the samples. The velocities of every time-sample must exist in the gwots. The columns are the same 2-level columns as in gwots. It is implemented in cycler.
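To make the shapes of gwots and cycle concrete, here is a rough pandas sketch. It assumes a hypothetical WOT with `(n, p)` columns, hypothetical per-gear engine-speed/vehicle-speed ratios, linear interpolation and a 0.1 km/h grid; `make_gwots` and `lookup_cycle` are illustrative names, not the project's interpolate_wot_on_v_grid() or its cycler code.

```python
import numpy as np
import pandas as pd

# Hypothetical full-load curve: engine speed n [rpm] vs. full-load power p [kW].
wot = pd.DataFrame({"n": [800.0, 2000.0, 3500.0, 5000.0, 6000.0],
                    "p": [8.0, 30.0, 60.0, 80.0, 75.0]})
# Hypothetical engine-speed/vehicle-speed ratios per gear [rpm per km/h].
n2vs = {1: 120.0, 2: 75.0, 3: 50.0}


def make_gwots(wot, n2vs, v_step=0.1):
    """Sketch of a grid-WOTs frame: rows are rounded velocities, columns are (item, gear)."""
    v_max = max(wot["n"].max() / n2v for n2v in n2vs.values())
    # Build the grid from integer steps, then round, so labels equal rounded velocities exactly.
    v_grid = np.round(np.arange(int(round(v_max / v_step)) + 1) * v_step, 1)
    columns = {}
    for gear, n2v in n2vs.items():
        n = v_grid * n2v
        columns[("n", gear)] = n
        # Outside the WOT's engine-speed range the power is undefined (NaN).
        columns[("p", gear)] = np.interp(n, wot["n"], wot["p"], left=np.nan, right=np.nan)
    gwots = pd.DataFrame(columns, index=pd.Index(v_grid, name="v"))  # tuple keys -> 2-level columns
    gwots.columns.names = ["item", "gear"]
    return gwots.sort_index(axis=1)


def lookup_cycle(cycle, gwots, item="p"):
    """Fetch one gwots item for every cycle sample, keeping the cycle's time index."""
    v = cycle["v"].round(1)
    if not v.isin(gwots.index).all():
        raise ValueError("some cycle velocities are missing from the gwots grid")
    per_gear = gwots.loc[v.to_numpy(), item]
    per_gear.index = cycle.index
    # Restore the 2-level (item, gear) columns that selecting `item` dropped.
    per_gear.columns = pd.MultiIndex.from_product([[item], per_gear.columns],
                                                  names=["item", "gear"])
    return per_gear


gwots = make_gwots(wot, n2vs)
cycle = pd.DataFrame({"v": [0.0, 3.2, 10.5, 25.0]}, index=pd.Index([0, 1, 2, 3], name="t"))
p_cycle = lookup_cycle(cycle, gwots)
```

Rounding both the grid and the cycle velocities to the same precision is what makes the exact-label lookup possible; a velocity not on the grid raises instead of silently producing NaNs.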

3.2. Code Structure

The computation code is roughly divided into these Python modules:

- formulae

  Physics and engineering code, implemented in modules:

  - engine
  - vmax
  - downscale
  - vehicle

- orchestration

  The code producing the actual gear-shifting, implemented in modules:

  - datamodel
  - cycler
  - gridwots (TODO)
  - scheduler (TODO)
  - experiment (TO BE DROPPED, datamodel will assume all of its functionality)

- scheduler

  (TODO) The internal software component that decides which formulae to execute, based on the given inputs and the requested outputs.

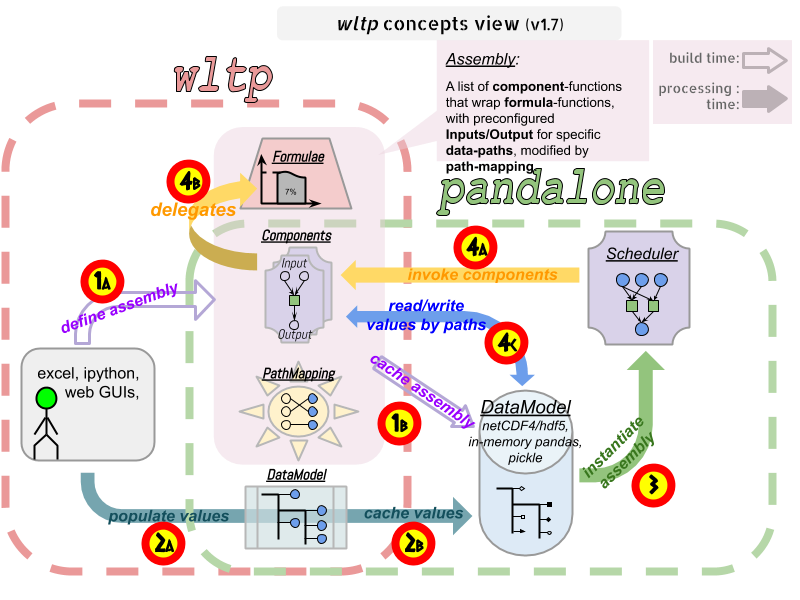

The blueprint for the underlying software ideas is given with this diagram:

Note that there is currently no scheduler component; such a component would allow executing the tool with a varying list of available inputs & required data, automatically computing only what is not already given.
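The idea of "compute only what is not already given" can be illustrated with a small recursive dependency resolver. This is a sketch of one possible approach, not the planned scheduler: `schedule`, the formula triples and the two example formulas are all hypothetical, chosen only to show the resolution logic.

```python
from typing import Callable, Dict, Iterable, List, Tuple

# A hypothetical formula: (output name, input names, function computing the output).
Formula = Tuple[str, Tuple[str, ...], Callable[..., float]]


def schedule(formulae: Iterable[Formula], given: Dict[str, float], wanted: Iterable[str]):
    """Compute only the `wanted` values not already `given`, resolving inputs recursively."""
    providers = {out: (ins, fn) for out, ins, fn in formulae}
    values = dict(given)
    executed: List[str] = []

    def resolve(name: str, stack: Tuple[str, ...] = ()) -> float:
        if name in values:  # already given, or computed earlier
            return values[name]
        if name in stack:
            raise ValueError(f"cyclic dependency on {name!r}")
        if name not in providers:
            raise KeyError(f"no input or formula provides {name!r}")
        ins, fn = providers[name]
        args = [resolve(dep, stack + (name,)) for dep in ins]
        values[name] = fn(*args)
        executed.append(name)
        return values[name]

    return {name: resolve(name) for name in wanted}, executed


formulae = [
    ("n_norm", ("n", "n_idle", "n_rated"), lambda n, n_idle, n_rated: (n - n_idle) / (n_rated - n_idle)),
    ("p_norm", ("p", "p_rated"), lambda p, p_rated: p / p_rated),
]
inputs = {"n": 3000.0, "n_idle": 800.0, "n_rated": 5000.0, "p": 40.0, "p_rated": 80.0}

results, executed = schedule(formulae, inputs, ["n_norm", "p_norm"])
print(executed)  # -> ['n_norm', 'p_norm']

# When p_norm is already given, only n_norm is computed.
results, executed = schedule(formulae, {**inputs, "p_norm": 0.5}, ["n_norm", "p_norm"])
print(executed)  # -> ['n_norm']
```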

3.3. Specs & Algorithm

This program imitates, to some degree, the MS Access DB (as of July 2019), following the 08.07.2019_HS rev2_23072019 GTR specification (docs/_static/WLTP-GS-TF-41 GTR 15 annex 1 and annex 2 08.07.2019_HS rev2_23072019.docx, included in the docs/_static folder).

Note

There is a distinctive difference between this implementation and the AccDB: all computations are vectorial, meaning that all intermediate results are calculated & stored for all time sample-points, and not just for the side of each condition that evaluates to true on each sample.
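The contrast can be seen on a toy conditional. In the sketch below, the condition `v < threshold` and both branch formulas are made up for illustration; the point is that the row-by-row version only evaluates the branch that is true for each sample, while the vectorial version evaluates both branches for all samples, keeps them as intermediate results, and lets the condition merely select between them (here with `numpy.where`).

```python
import numpy as np


def per_sample(v, threshold=10.0):
    """Row-by-row style: only the branch that is true is evaluated for each sample."""
    out = []
    for x in v:
        if x < threshold:
            out.append(x * 2.0)
        else:
            out.append(x + 10.0)
    return np.array(out)


def vectorial(v, threshold=10.0):
    """Vectorial style: both branches are evaluated for ALL samples and kept."""
    interim = {
        "cond": v < threshold,
        "branch_true": v * 2.0,
        "branch_false": v + 10.0,
    }
    interim["out"] = np.where(interim["cond"], interim["branch_true"], interim["branch_false"])
    return interim


v = np.array([0.0, 5.0, 20.0, 50.0])
print(per_sample(v))  # -> [ 0. 10. 30. 60.]
print(vectorial(v)["out"])  # -> [ 0. 10. 30. 60.]
```

The final results agree, but only the vectorial version leaves every intermediate column available for inspection afterwards.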

The latest official version of this GTR, along with other related documents, may be found at UNECE’s site: